Covid-19 has all too clearly shown how digital tools have become an integral part of work—be they used for online meetings or for the increasing surveillance and monitoring of workers at home or in the workplace. Indeed, as businesses try to mitigate the risks and get employees to come back to the workplace, they are introducing a vast array of applications and wearables.

In many cases, employees are left to accept these new surveillance technologies or risk losing their jobs. Covid-19 has thus expanded a power divide which was already growing, allowing the owners and managers of these technologies to extract, and capitalise upon, more and more data from workers.

Strong union responses are immediately required to balance out this power asymmetry and safeguard workers’ privacy and human rights. Improvements to collective agreements as well as to regulatory environments are urgently needed. Co-ordinated action is needed to defend workers’ rights to shape their working lives, free from the oppression of opaque algorithms and predictive analyses conducted by known and unknown firms.

The acclaimed author of The Age of Surveillance Capitalism, Shoshana Zuboff, is adamant we should make the trading of human futures illegal. Our politicians should act to do so. In the meantime, unions should immediately engage to limit the threats to workers’ rights, by negotiating the various phases of what I call the ‘data lifecycle at work’ and securing co-governance of data-generating and data-driven algorithmic systems. Wider adoption of such gains can be advanced through improvements to regulation.

Data lifecycle

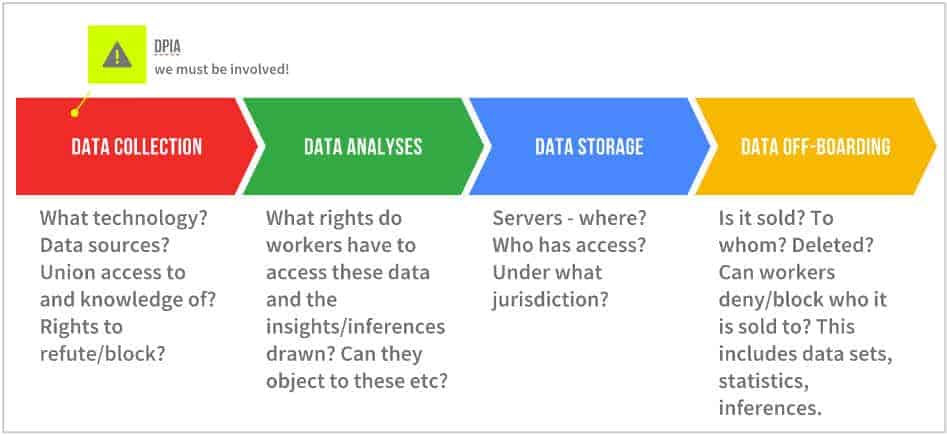

The figure above depicts the data lifecycle at work. Some of the demands under each of the four stages, though far from all, are covered for workers in Europe’s General Data Protection Regulation zone. For workers in most other jurisdictions, these rights, if negotiated, would be new.

The data-collection phase covers internal and external collection tools, the sources of the data, whether shop stewards and workers have been informed about the intended tools and whether they have the right to rebut or reject them. Much data extraction is hidden from the worker (or citizen) and management must be held accountable.

In the GDPR area, companies are obliged to conduct data protection impact assessments (DPIAs) when introducing new technology likely to involve a high risk to individuals’ rights and freedoms. Where appropriate, they must also seek the views of the workers concerned or their representatives. Yet very few unions have access to, or even know about, these assessments. Unions should claim their rights to be party to them.

In the data-analysis phase, until trading in human futures is banned, unions must cover the regulatory gaps which have been identified, namely the lack of rights with regard to the inferences (the profiles, the statistical probabilities) drawn from algorithmic systems. Workers should have greater insight into, and access to, these inferences and rights to rectify, block or even delete them.

Such inferences can be used to determine optimal scheduling, wages (if linked to performance metrics) or, in human resources, whom to hire, promote or fire. They can be used to predict behaviour based on historic patterns and on emotional and/or activity data. Access to the inferences is key to the empowerment of workers and indeed to human rights. Without these rights, there will be few checks and balances on management’s use of algorithmic systems or on data-generated discrimination and bias.

The data-storage phase is important but will become more so if e-commerce negotiations on the ‘free flow of data’, within, and on the fringes of, the World Trade Organization, are actualised. This would entail data being moved across borders to what we can expect would be areas of least privacy protection. They would then be used, sold, rebundled and sold again in whatever way corporations saw fit.

The 2020 European Court of Justice ruling invalidating the EU-US Privacy Shield can be seen as a slap in the face for proponents of the unrestricted flow of data but the demand is still on the table. If it were to be realised, and workers had not secured much better data rights via national law or collective agreements, their access to and control over these data would be weaker still.

Unions must also be vigilant in the data off-boarding phase. This refers to the deletion of data but also the sale and transfer of data sets, with associated inferences and profiles, to third parties. Unions should negotiate much better rights to know what is being off-boarded and to whom, with scope to object to or even block the process—this is hugely important in light of the e-commerce trade negotiations. Equally, unions should as a minimum have the right to request that data sets and inferences are deleted when their original purpose has been fulfilled, in line with the principle of data minimisation recognised in the GDPR (article 5.1c).

Seat at the table

Negotiating these rights is intrinsically linked to the next vital goal—mandatory co-governance of algorithmic systems at work. Many shop stewards are unaware of the systems in place in their companies. But management too is often ignorant of their details and fails to understand their risks and challenges, as well as potentials. To fulfil the European Union’s push for human-in-command oversight, unions must have a seat at the table in the governance of such systems.

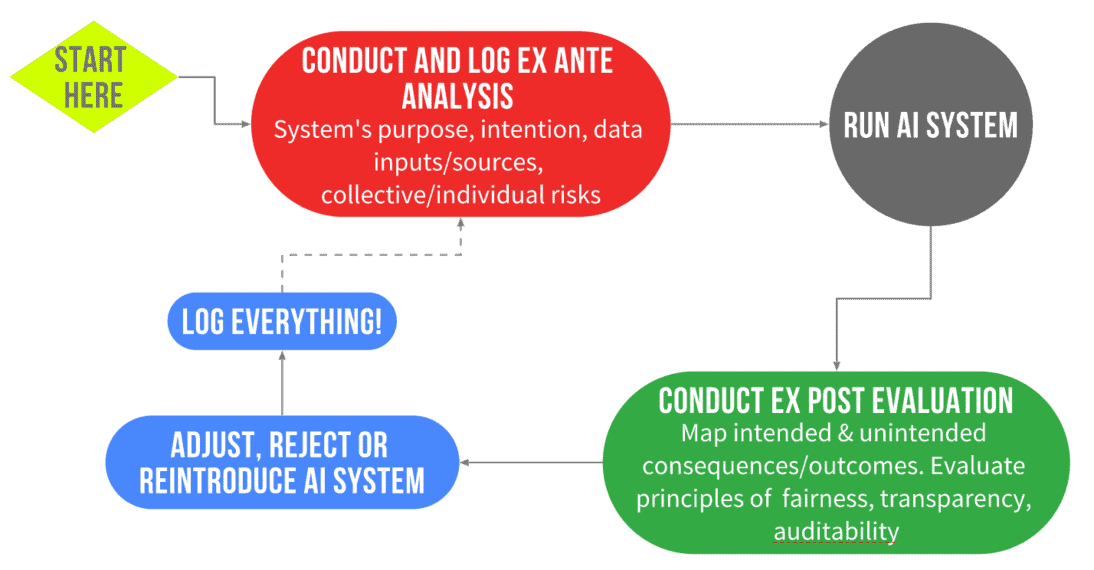

The figure above depicts a co-governance model, which can be adapted to particular national industrial-relations systems and structures, such as a works council.

Shop stewards must be party to the ex-ante and, importantly, the ex-post evaluations of an algorithmic system. Is it fulfilling its purpose? Is it biased? If so, how can the parties mitigate this bias? What are the negotiated trade-offs? Is the system in compliance with laws and regulations? Both the predicted and realised outcomes must be logged for future reference. This model will serve to hold management accountable for the use of algorithmic systems and the steps they will take to reduce or, better, eradicate bias and discrimination.
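To make the idea of logging predicted and realised outcomes concrete, here is a minimal Python sketch of what a jointly held audit log might look like. Everything in it is an assumption made for illustration: the `Decision` record, the group labels, the sample entries and the ratio used as a rough bias indicator are hypothetical, not a prescribed method or any vendor's format. What counts as an acceptable gap between groups would itself be a matter for negotiation.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Decision:
    """One logged decision (hypothetical record layout)."""
    worker_group: str  # grouping agreed by the parties, e.g. by contract type
    predicted: bool    # what the system recommended (e.g. "promote")
    realised: bool     # what actually happened after human review


def selection_rates(log, field):
    """Share of positive outcomes per group for 'predicted' or 'realised'."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for decision in log:
        totals[decision.worker_group] += 1
        positives[decision.worker_group] += int(getattr(decision, field))
    return {group: positives[group] / totals[group] for group in totals}


def rate_ratio(rates):
    """Lowest group rate divided by the highest; 1.0 means equal rates."""
    if not rates:
        return 1.0
    highest = max(rates.values())
    if highest == 0:
        return 1.0
    return min(rates.values()) / highest


# Illustrative entries only; not real data.
audit_log = [
    Decision("A", True, True),
    Decision("A", True, False),
    Decision("A", False, False),
    Decision("A", True, True),
    Decision("B", True, True),
    Decision("B", False, False),
    Decision("B", False, False),
    Decision("B", False, False),
]

predicted_rates = selection_rates(audit_log, "predicted")
realised_rates = selection_rates(audit_log, "realised")

print("predicted:", predicted_rates, "ratio:", rate_ratio(predicted_rates))
print("realised: ", realised_rates, "ratio:", rate_ratio(realised_rates))
```

Comparing the two ratios over time gives the parties a shared, if crude, starting point for the ex-post questions: whether the system's recommendations differ across groups, and whether human review narrows or widens that gap.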

The governance of algorithmic systems will require new structures, union capacity-building and management transparency. Without such changes, the risk of adverse effects of algorithmic systems, on workers’ rights and human rights, is simply too large. Under the GDPR, workers already have some rights which unions can utilise. In relation to the DPIAs mentioned above, unions must demand a role in their preparation and periodic re-evaluation. No employer can assess fairness unilaterally—what is fair for the employer is not necessarily fair for the workers.

Further, unions can co-ordinate Data Subject Access Requests (DSARs) on behalf of their members, as provided for in article 15 of the GDPR. While the GDPR gives these rights to the individual, there is nothing stopping a union co-ordinating requests in a given company. To fully benefit from such a co-ordinated approach, unions should have access to legal and data-analysis experts. (Note that inferences based on personal data are themselves treated as personal data if they allow the person to be identified, and so GDPR rights apply.)

Trade unions, especially within the GDPR zone, have a range of rights and tools to limit the threats to workers’ privacy and human rights. These should be utilised and urgently prioritised to prevent the further commodification of workers. Moving towards collective rights over, and co-regulation of, algorithmic systems is an important step in maintaining the power of unions. As the demand for digital tools to monitor and surveil workers continues to rise, unions simply cannot afford not to give these issues utmost priority.

This is part of a series on the Transformation of Work supported by the Friedrich Ebert Stiftung